Gaussian is a computational chemistry program available to students, staff, and faculty. See the instructions for accessing the software share for more information.

Installation Instructions

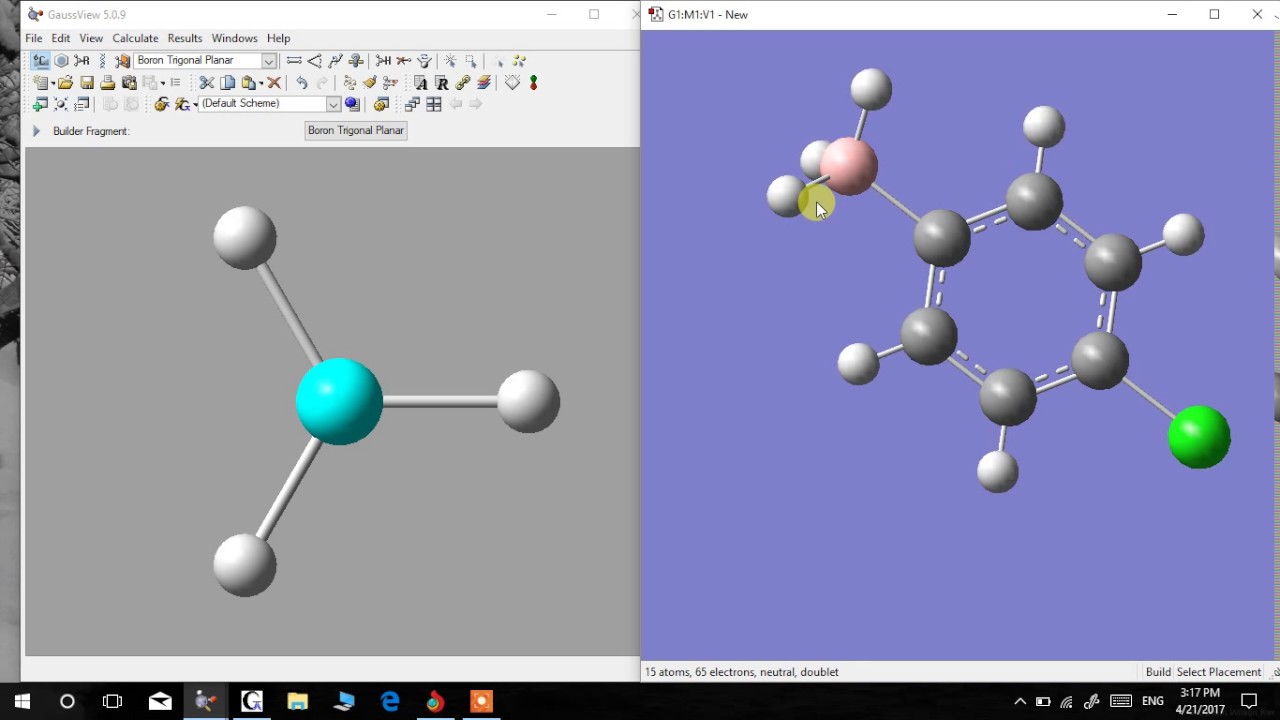

- Frontier electron density (FED) was obtained using the Gaussian 09 program (Frisch et al., 2009). Molecular orbital calculations were conducted at the B3LYP/6-311G(d,p) level, with the optimal.

- General overview of my workflow when using G09. This can be used as a tutorial, and example files can be found at https://github.com/gclen/gaussianfiles.
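As a hedged illustration of the kind of input file such a calculation starts from, the Python sketch below assembles a Gaussian input deck for a B3LYP/6-311G(d,p) geometry optimization. It follows the usual section order (optional Link 0 lines, route line beginning with #, blank line, title, blank line, charge and multiplicity, Cartesian coordinates, closing blank line). The helper name gaussian_input, the approximate water coordinates, and the choice of the Opt keyword are illustrative assumptions; the additional steps needed for a frontier electron density analysis are not shown.

```python
from typing import List, Optional, Tuple

Atom = Tuple[str, float, float, float]  # (element symbol, x, y, z) in angstroms


def gaussian_input(route: str, title: str, charge: int, multiplicity: int,
                   atoms: List[Atom], chk: Optional[str] = None,
                   mem: Optional[str] = None) -> str:
    """Assemble the text of a Gaussian input file (hypothetical helper)."""
    lines: List[str] = []
    # Link 0 commands (optional) come first.
    if chk:
        lines.append(f"%chk={chk}")
    if mem:
        lines.append(f"%mem={mem}")
    lines.append(route)   # route section; must start with '#'
    lines.append("")      # blank line terminates the route section
    lines.append(title)   # title section
    lines.append("")      # blank line terminates the title section
    lines.append(f"{charge} {multiplicity}")
    for symbol, x, y, z in atoms:
        lines.append(f"{symbol:<2} {x:12.6f} {y:12.6f} {z:12.6f}")
    lines.append("")      # molecule specification ends with a blank line
    return "\n".join(lines) + "\n"


# Illustrative water geometry (approximate Cartesian coordinates, angstroms).
water = [("O", 0.0, 0.0, 0.1173),
         ("H", 0.0, 0.7572, -0.4692),
         ("H", 0.0, -0.7572, -0.4692)]
text = gaussian_input("#p B3LYP/6-311G(d,p) Opt", "water B3LYP optimization",
                      0, 1, water, chk="water.chk")
print(text)
```

The text can then be saved with a .gjf or .com extension and run with the locally installed Gaussian.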

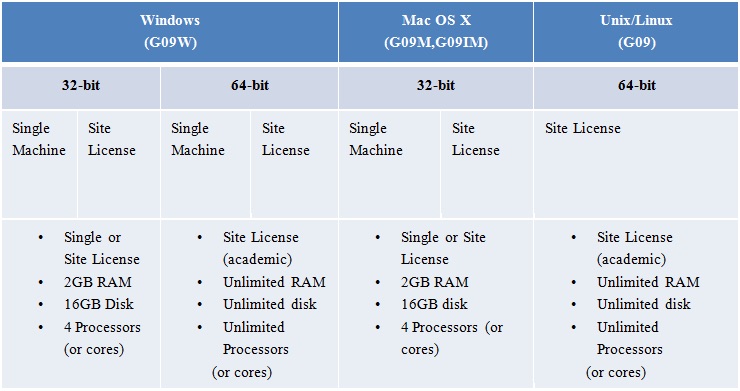

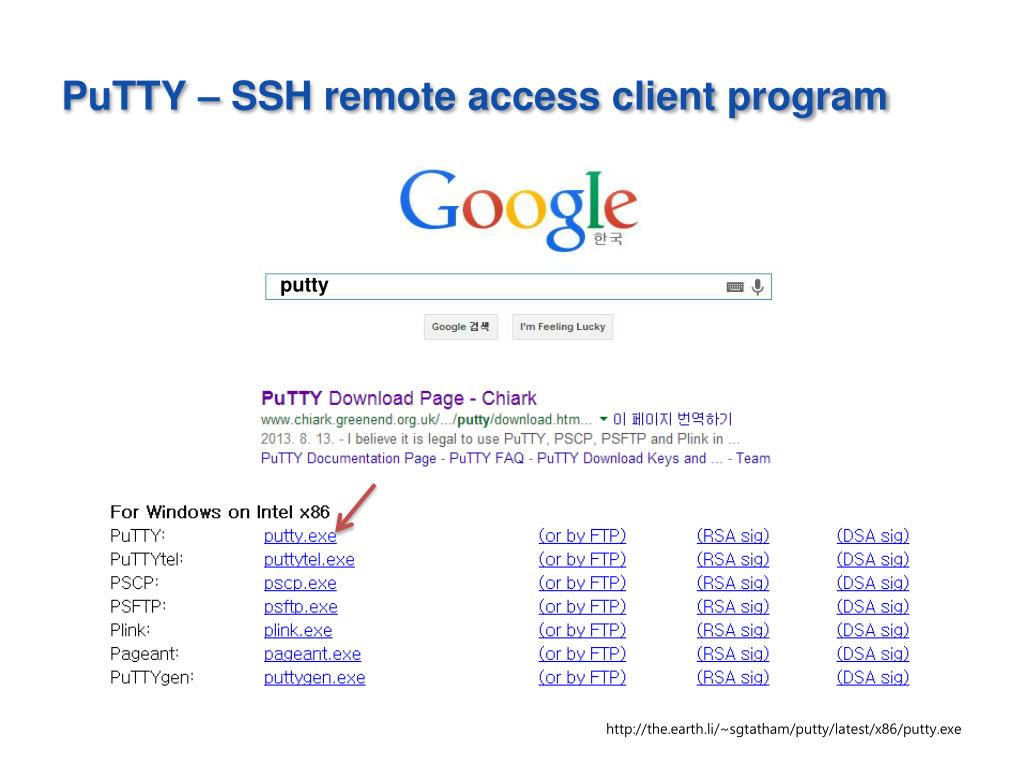

1. In the Gaussian folder on cheme-software, select the most recent edition of Gaussian (currently Gaussian 09 Rev D.01) and open that folder. Then open the Gaussian 09 folder and select the appropriate version for your operating system. To do this, you must know whether you are running Linux, Mac OS X, or Windows, and, on Windows, whether your installation is 64-bit or 32-bit. If you are unsure, go to Start, select Control Panel, then System, and look under System type. Most CMU computers run 64-bit Windows, and the appropriate version of Gaussian for them is G09W_64-bit_binary.

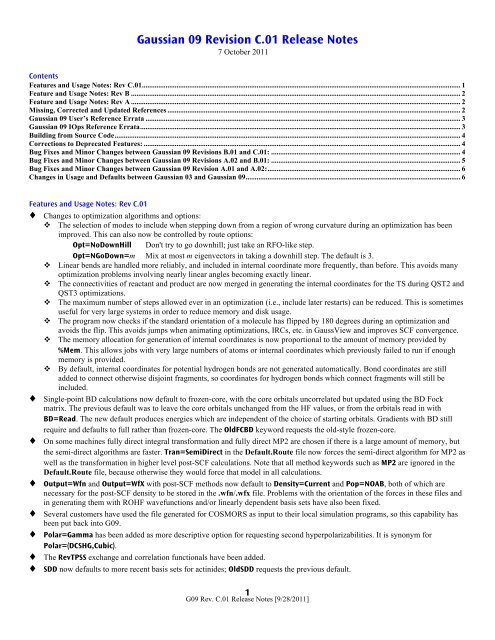

Last updated on: 05 January 2017. The Gaussian 09 license prohibits the publication of comparative benchmark data. A SLURM script must be used to run a Gaussian 09 job. The HPCC main cluster, Penzias, supports Gaussian jobs demanding up to 64 GB of memory.

2. In the appropriate folder for your operating system, run the application called setup. If you receive a security warning, click Run.

3. Click Next to begin the installation.

4. You should now be prompted for your name, company, and serial number. Enter your name, Carnegie Mellon University, and the serial number from the file serial number.txt, which is in the same folder as setup. Click Next, and a pop-up should confirm that the serial number was validated. Click OK.

5. Select the components of Gaussian to install (all are recommended), then click Next.

6. Select a folder in which to install Gaussian (the default folder is recommended), then click Next.

7. Click Install.

8. A pop-up should eventually appear. Click OK.

9. Click Finish.
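Step 1 requires knowing the operating system and, on Windows, whether it is 32- or 64-bit. As an alternative to the Control Panel route, the Python sketch below prints a label built from the standard platform module. The helper name describe_platform and the set of machine strings treated as 64-bit are assumptions covering common values, not an exhaustive list, so the result is worth cross-checking against System type in Control Panel.

```python
import platform

# Machine strings treated as 64-bit (assumed common values, not exhaustive).
_64_BIT_MACHINES = {"amd64", "x86_64", "arm64", "aarch64"}
# platform.system() names mapped to the names used in the installation steps.
_OS_NAMES = {"Windows": "Windows", "Linux": "Linux", "Darwin": "Mac OS X"}


def describe_platform(system: str, machine: str) -> str:
    """Turn platform.system()/platform.machine() strings into a readable label."""
    os_name = _OS_NAMES.get(system, system)
    if machine.lower() in _64_BIT_MACHINES:
        bits = "64-bit"
    else:
        bits = "32-bit (or unrecognized)"
    return f"{os_name}, {bits}"


if __name__ == "__main__":
    print(describe_platform(platform.system(), platform.machine()))
```

A result of "Windows, 64-bit" would point to the G09W_64-bit_binary folder mentioned in step 1.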

Gaussian Naive Bayes (GaussianNB)

Can perform online updates to model parameters via partial_fit. For details on the algorithm used to update feature means and variances online, see Stanford CS tech report STAN-CS-79-773 by Chan, Golub, and LeVeque.

Read more in the User Guide.
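The pairwise mean/variance update from that report can be sketched in NumPy as follows. This illustrates the technique under the assumption of unweighted samples and population (ddof=0) variances; the function name update_mean_variance is hypothetical here, and this is not scikit-learn's internal code. Each new batch's statistics are computed directly and merged with the running totals, so earlier samples never need to be revisited.

```python
import numpy as np


def update_mean_variance(n_past, mu_past, var_past, X_new):
    """Merge running per-feature statistics with a new batch.

    n_past, mu_past, var_past: count, mean and population variance so far.
    X_new: array of shape (n_batch, n_features).
    """
    n_new = X_new.shape[0]
    if n_new == 0:
        return n_past, mu_past, var_past
    mu_new = X_new.mean(axis=0)
    var_new = X_new.var(axis=0)  # population variance (ddof=0)
    if n_past == 0:
        return n_new, mu_new, var_new
    n_total = n_past + n_new
    delta = mu_new - mu_past
    mu_total = mu_past + delta * n_new / n_total
    # Combine the sums of squared deviations (M2) of both parts.
    m2_total = (var_past * n_past + var_new * n_new
                + delta ** 2 * n_past * n_new / n_total)
    return n_total, mu_total, m2_total / n_total


# Feed 100 samples in 7 uneven chunks and compare with a single pass.
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 3))
n, mu, var = 0, np.zeros(3), np.zeros(3)
for chunk in np.array_split(X, 7):
    n, mu, var = update_mean_variance(n, mu, var, chunk)
print(n, np.allclose(mu, X.mean(axis=0)), np.allclose(var, X.var(axis=0)))
```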

priors: Prior probabilities of the classes. If specified, the priors are not adjusted according to the data.


var_smoothing: Portion of the largest variance of all features that is added to variances for calculation stability.
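A small scikit-learn sketch of both parameters on a toy dataset (the data and parameter values are arbitrary choices for illustration): fixed priors come back unchanged in class_prior_, and the absolute smoothing amount stored in epsilon_ is var_smoothing times the largest per-feature variance of the training data.

```python
import numpy as np
from sklearn.naive_bayes import GaussianNB

X = np.array([[-1.0, -1.0], [-2.0, -1.0], [-3.0, -2.0],
              [1.0, 1.0], [2.0, 1.0], [3.0, 2.0]])
y = np.array([1, 1, 1, 2, 2, 2])

# Fixed priors are used as given, not re-estimated from class frequencies
# (here the classes are balanced, yet the priors stay at 0.2 / 0.8).
clf_priors = GaussianNB(priors=[0.2, 0.8]).fit(X, y)
print(clf_priors.class_prior_)

# var_smoothing is a fraction of the largest feature variance in X;
# the resulting absolute amount is exposed as epsilon_.
clf = GaussianNB(var_smoothing=1e-2).fit(X, y)
expected_eps = 1e-2 * np.var(X, axis=0).max()
print(np.isclose(clf.epsilon_, expected_eps))
```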

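class_count_: Number of training samples observed in each class.

class_prior_: Probability of each class.

classes_: Class labels known to the classifier.

epsilon_: Absolute additive value to variances.

sigma_: Variance of each feature per class (renamed var_ in later scikit-learn releases).

theta_: Mean of each feature per class.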
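The fitted attributes described above can be checked on a tiny dataset. In this sketch (toy data chosen for illustration) the labels are strings, so classes_ holds them in sorted order, and class_count_, class_prior_ and theta_ follow that same order. The per-class variance attribute is left out because its name differs between scikit-learn releases.

```python
import numpy as np
from sklearn.naive_bayes import GaussianNB

X = np.array([[1.0, 2.0], [3.0, 4.0], [5.0, 0.0], [7.0, 2.0], [9.0, 4.0]])
y = np.array(["b", "a", "b", "a", "b"])
clf = GaussianNB().fit(X, y)

print(clf.classes_)      # ['a' 'b'] (sorted class labels)
print(clf.class_count_)  # [2. 3.]
print(clf.class_prior_)  # [0.4 0.6]
# theta_ holds the per-class feature means, rows ordered like classes_:
# class 'a' -> mean of [3, 4] and [7, 2]; class 'b' -> mean of the other three rows.
print(clf.theta_)
```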

Examples
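A minimal usage example on a six-point, two-class dataset, fitting the model once with fit and once incrementally with partial_fit (which needs the full list of classes on its first call):

```python
import numpy as np
from sklearn.naive_bayes import GaussianNB

X = np.array([[-1, -1], [-2, -1], [-3, -2], [1, 1], [2, 1], [3, 2]])
Y = np.array([1, 1, 1, 2, 2, 2])

clf = GaussianNB()
clf.fit(X, Y)
print(clf.predict([[-0.8, -1]]))  # [1]

# The same model trained incrementally; classes must be given on the first call.
clf_pf = GaussianNB()
clf_pf.partial_fit(X, Y, np.unique(Y))
print(clf_pf.predict([[-0.8, -1]]))  # [1]
```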

Methods

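| Method | Description |
| --- | --- |
| fit(X, y[, sample_weight]) | Fit Gaussian Naive Bayes according to X, y. |
| get_params([deep]) | Get parameters for this estimator. |
| partial_fit(X, y[, classes, sample_weight]) | Incremental fit on a batch of samples. |
| predict(X) | Perform classification on an array of test vectors X. |
| predict_log_proba(X) | Return log-probability estimates for the test vector X. |
| predict_proba(X) | Return probability estimates for the test vector X. |
| score(X, y[, sample_weight]) | Return the mean accuracy on the given test data and labels. |
| set_params(**params) | Set the parameters of this estimator. |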

fit(X, y, sample_weight=None)

Fit Gaussian Naive Bayes according to X, y.

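- X: array-like of shape (n_samples, n_features). Training vectors, where n_samples is the number of samples and n_features is the number of features.

- y: array-like of shape (n_samples,). Target values.

- sample_weight: array-like of shape (n_samples,), default=None. Weights applied to individual samples (1. for unweighted).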

New in version 0.17: Gaussian Naive Bayes supports fitting with sample_weight.

- Returns: self (object).
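One way to see what sample_weight does: with integer weights, fitting with weights should give the same class counts and per-class means as fitting on data where each row is repeated that many times. The sketch below checks this on made-up data. It compares only means and counts, because the smoothing term epsilon_ is computed from the unweighted rows and therefore differs slightly between the two fits.

```python
import numpy as np
from sklearn.naive_bayes import GaussianNB

X = np.array([[0.0, 1.0], [1.0, 3.0], [4.0, 0.0], [5.0, 2.0]])
y = np.array([0, 0, 1, 1])
w = np.array([1, 3, 2, 1])  # integer weights chosen for illustration

weighted = GaussianNB().fit(X, y, sample_weight=w)

# Repeat row i exactly w[i] times and fit without weights.
repeated = GaussianNB().fit(np.repeat(X, w, axis=0), np.repeat(y, w))

print(np.allclose(weighted.theta_, repeated.theta_))              # True
print(np.allclose(weighted.class_count_, repeated.class_count_))  # True
```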

get_params(deep=True)

Get parameters for this estimator.

- deep: bool, default=True. If True, will return the parameters for this estimator and contained subobjects that are estimators.

- Returns: params (dict). Parameter names mapped to their values.
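For GaussianNB the constructor parameters are priors and var_smoothing, so get_params returns a dict with exactly those keys. A short check (the var_smoothing value is arbitrary):

```python
from sklearn.naive_bayes import GaussianNB

clf = GaussianNB(var_smoothing=1e-8)
params = clf.get_params()
print(sorted(params))           # ['priors', 'var_smoothing']
print(params["var_smoothing"])  # 1e-08
```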

partial_fit(X, y, classes=None, sample_weight=None)

Incremental fit on a batch of samples.

This method is expected to be called several times consecutively on different chunks of a dataset so as to implement out-of-core or online learning.

This is especially useful when the whole dataset is too big to fit in memory at once.

This method has some performance and numerical stability overhead, hence it is better to call partial_fit on chunks of data that are as large as possible (as long as fitting in the memory budget) to hide the overhead.

- X: array-like of shape (n_samples, n_features). Training vectors, where n_samples is the number of samples and n_features is the number of features.

- y: array-like of shape (n_samples,). Target values.


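- classes: array-like of shape (n_classes,), default=None. List of all the classes that can possibly appear in the y vector. Must be provided at the first call to partial_fit; can be omitted in subsequent calls.

- sample_weight: array-like of shape (n_samples,), default=None. Weights applied to individual samples (1. for unweighted).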

- Returns: self (object).
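The out-of-core pattern described above can be sketched by splitting synthetic data into chunks and calling partial_fit once per chunk, passing classes only on the first call. The per-class means and counts should then match a single fit on the full data; the data, chunk count, and random seed are arbitrary choices for illustration.

```python
import numpy as np
from sklearn.naive_bayes import GaussianNB

rng = np.random.default_rng(42)
X = np.vstack([rng.normal(-2.0, 1.0, size=(300, 2)),
               rng.normal(2.0, 1.0, size=(300, 2))])
y = np.array([0] * 300 + [1] * 300)

# Shuffle so that each chunk can contain both classes.
order = rng.permutation(len(y))
X, y = X[order], y[order]

online = GaussianNB()
classes = np.unique(y)  # required on the first call only
chunks = zip(np.array_split(X, 6), np.array_split(y, 6))
for i, (X_chunk, y_chunk) in enumerate(chunks):
    online.partial_fit(X_chunk, y_chunk, classes=classes if i == 0 else None)

batch = GaussianNB().fit(X, y)
print(np.allclose(online.theta_, batch.theta_))              # True
print(np.allclose(online.class_count_, batch.class_count_))  # True
```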

predict(X)

Perform classification on an array of test vectors X.

- X: array-like of shape (n_samples, n_features)

- Returns: ndarray of shape (n_samples,) with the predicted target values for X.

predict_log_proba(X)

Return log-probability estimates for the test vector X.

- X: array-like of shape (n_samples, n_features)

Returns the log-probability of the samples for each class in the model. The columns correspond to the classes in sorted order, as they appear in the attribute classes_.
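A short sketch of the column ordering (toy one-feature data, labels chosen for illustration): with string labels, classes_ is sorted alphabetically, the columns of predict_log_proba follow that order, and exponentiating the log-probabilities gives rows that sum to 1 and match predict_proba.

```python
import numpy as np
from sklearn.naive_bayes import GaussianNB

X = np.array([[0.0], [0.5], [1.0], [4.0], [4.5], [5.0], [9.0], [9.5], [10.0]])
y = np.array(["low", "low", "low", "mid", "mid", "mid", "high", "high", "high"])
clf = GaussianNB().fit(X, y)

log_p = clf.predict_log_proba([[4.2]])
print(clf.classes_)  # ['high' 'low' 'mid'] (sorted; this is the column order)
# Exponentiated log-probabilities sum to 1 and agree with predict_proba.
print(np.isclose(np.exp(log_p).sum(), 1.0))                    # True
print(np.allclose(np.exp(log_p), clf.predict_proba([[4.2]])))  # True
```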

predict_proba(X)

Return probability estimates for the test vector X.

- X: array-like of shape (n_samples, n_features)


Returns the probability of the samples for each class in the model. The columns correspond to the classes in sorted order, as they appear in the attribute classes_.
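The relationship between predict_proba and predict can be checked directly: column j is the probability of clf.classes_[j], and the predicted label in each row is the class with the highest probability. The toy data below is an arbitrary illustration with integer labels 2 and 0, which classes_ sorts to [0, 2].

```python
import numpy as np
from sklearn.naive_bayes import GaussianNB

X = np.array([[1.0, 1.0], [1.5, 2.0], [2.0, 1.5],
              [6.0, 6.0], [6.5, 7.0], [7.0, 6.5]])
y = np.array([2, 2, 2, 0, 0, 0])
clf = GaussianNB().fit(X, y)

X_test = np.array([[1.2, 1.4], [6.8, 6.2], [3.5, 3.0]])
proba = clf.predict_proba(X_test)

print(clf.classes_)                      # [0 2] (column order of proba)
print(np.allclose(proba.sum(axis=1), 1))  # True
# predict() picks the class with the highest probability in each row.
print(np.array_equal(clf.predict(X_test),
                     clf.classes_[proba.argmax(axis=1)]))  # True
```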

score(X, y, sample_weight=None)

Return the mean accuracy on the given test data and labels.

In multi-label classification, this is the subset accuracy, which is a harsh metric since you require for each sample that each label set be correctly predicted.

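- X: array-like of shape (n_samples, n_features). Test samples.

- y: array-like of shape (n_samples,) or (n_samples, n_outputs). True labels for X.

- sample_weight: array-like of shape (n_samples,), default=None. Sample weights.

- Returns: score (float). Mean accuracy of self.predict(X) with respect to y.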
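For a single-label classifier such as GaussianNB, score is the fraction of correct predictions, and with sample_weight it becomes a weighted average of the correct/incorrect indicators. The sketch below checks both on made-up data, where one test label is deliberately wrong so the accuracy is not trivially 1.

```python
import numpy as np
from sklearn.naive_bayes import GaussianNB

X_train = np.array([[0.0], [1.0], [2.0], [8.0], [9.0], [10.0]])
y_train = np.array([0, 0, 0, 1, 1, 1])
clf = GaussianNB().fit(X_train, y_train)

X_test = np.array([[0.5], [1.5], [9.5], [6.0]])
y_test = np.array([0, 1, 1, 1])  # the label for 1.5 is deliberately wrong
w = np.array([1.0, 1.0, 1.0, 3.0])

correct = clf.predict(X_test) == y_test
print(clf.score(X_test, y_test))  # 0.75
print(np.isclose(clf.score(X_test, y_test), correct.mean()))  # True
print(np.isclose(clf.score(X_test, y_test, sample_weight=w),
                 np.average(correct, weights=w)))  # True
```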

set_params(**params)

Set the parameters of this estimator.

The method works on simple estimators as well as on nested objects (such as Pipeline). The latter have parameters of the form <component>__<parameter> so that it’s possible to update each component of a nested object.


- **params: dict. Estimator parameters.

- Returns: self, the estimator instance.
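Both cases can be illustrated with a short sketch: on a plain GaussianNB the parameter is set by name, while inside a pipeline built with make_pipeline the step is named after its lowercased class name ("gaussiannb"), so the nested parameter uses the <component>__<parameter> form. The value 1e-6 is arbitrary.

```python
from sklearn.naive_bayes import GaussianNB
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Simple estimator: parameters are set by their plain names.
clf = GaussianNB().set_params(var_smoothing=1e-6)
print(clf.var_smoothing)  # 1e-06

# Nested object: make_pipeline names the GaussianNB step "gaussiannb",
# so its parameters are addressed as gaussiannb__<parameter>.
pipe = make_pipeline(StandardScaler(), GaussianNB())
pipe.set_params(gaussiannb__var_smoothing=1e-6)
print(pipe.get_params()["gaussiannb__var_smoothing"])  # 1e-06
```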